In-Context Learning and the Limits of Prompting

Lecture 3.1 covered the mechanics of API calls. Lecture 3.2 surveyed the model landscape. Lecture 3.3 addressed generation parameters. This lecture turns to a more fundamental question: how do you teach an LLM to behave the way you want? The answer is in-context learning — and understanding its limits leads directly to the concept that defines the rest of this course: context engineering.

In-Context Learning

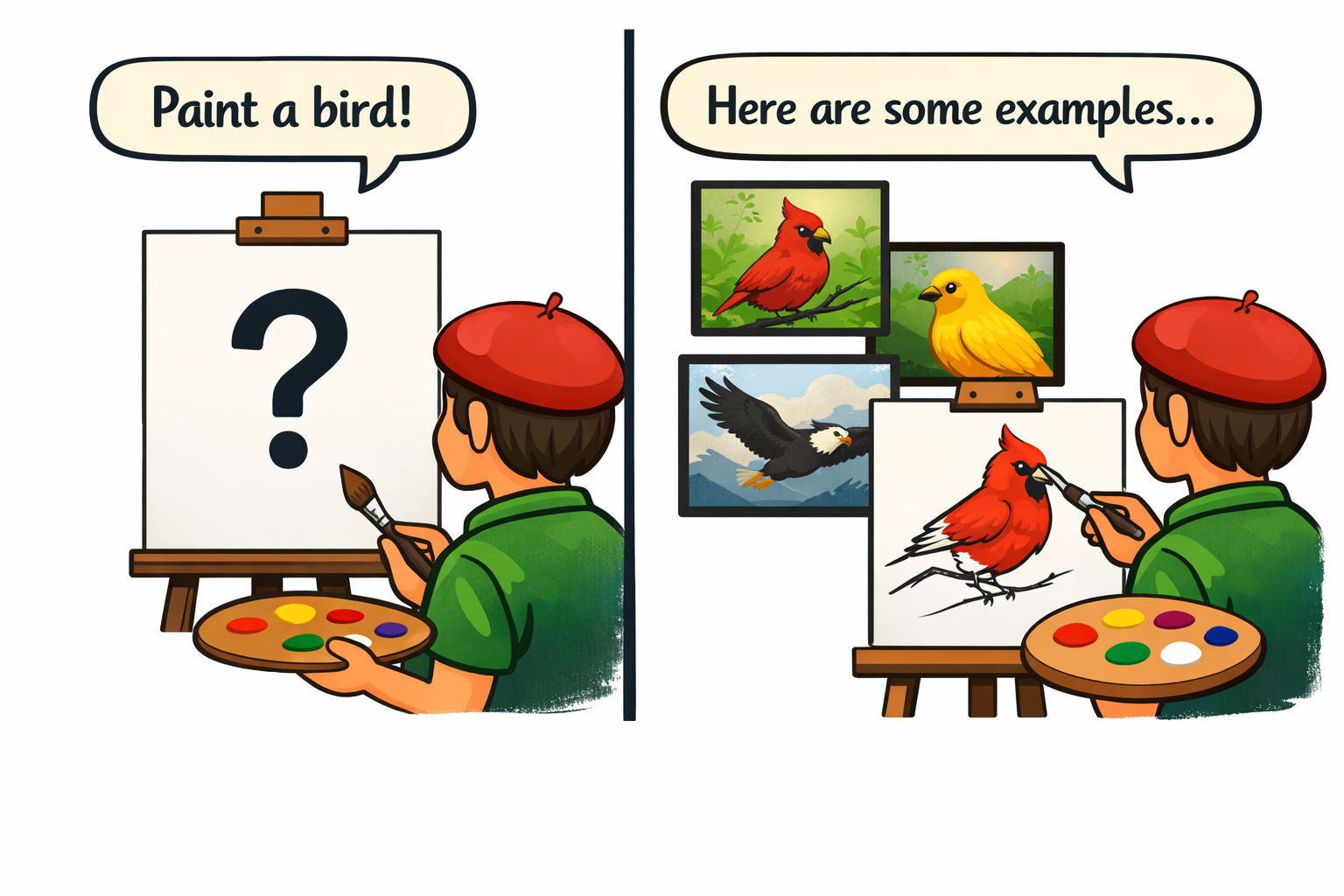

In-context learning is the ability to teach an LLM a new pattern by showing it examples in the prompt. The model's weights do not change. There is no retraining or fine-tuning. The model sees the examples in its context and applies the pattern to new inputs.

This is one of the most practical capabilities available to agent developers. You can customize model behavior instantly, without a machine learning pipeline, simply by providing good examples.

Zero-Shot Prompting

Zero-shot prompting means giving the model an instruction with no examples:

Classify the sentiment of this review as positive, negative, or neutral:

"The food was decent but the service was painfully slow."

The model relies entirely on its training data to interpret the task and produce a response. For straightforward tasks like sentiment classification, modern frontier models handle this well. But two problems arise. First, the model may format its answer unpredictably — returning a full sentence like "The sentiment is negative" instead of a single label. Second, for tasks that require more nuance or domain-specific judgment, zero-shot accuracy degrades because the model has nothing to calibrate against except its pre-training.

Few-Shot Prompting

Few-shot prompting adds examples before the task:

Classify the sentiment of each review:

Review: "Absolutely loved it, best meal I've had in years!"

Sentiment: positive

Review: "It was fine. Nothing special."

Sentiment: neutral

Review: "The food was decent but the service was painfully slow."

Sentiment:

The model learns two things from these examples: what the correct classification looks like, and how to format the response. With the examples above, the model will almost certainly respond with a single word — matching the pattern it was shown. No training required.

The zero_vs_few_shot.py script demonstrates this directly. It classifies the same set of reviews using both approaches and prints the results side by side. Zero-shot responses may vary in format — full sentences, explanations, inconsistent labels. Few-shot responses are consistently single words matching the demonstrated pattern.
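A comparison in the spirit of zero_vs_few_shot.py can be sketched as two prompt builders. This is a minimal sketch, not the course script: the function names `build_zero_shot` and `build_few_shot` are hypothetical, and the API call itself is left out so the two prompts can be inspected directly.

```python
# Hypothetical helpers; the actual zero_vs_few_shot.py may be structured differently.
EXAMPLES = [
    ("Absolutely loved it, best meal I've had in years!", "positive"),
    ("It was fine. Nothing special.", "neutral"),
]

def build_zero_shot(review: str) -> str:
    """Instruction only: the model must infer both the task and the output format."""
    return (
        "Classify the sentiment of this review as positive, negative, or neutral:\n\n"
        f'"{review}"'
    )

def build_few_shot(review: str, examples=EXAMPLES) -> str:
    """Instruction plus demonstrations, ending with an open 'Sentiment:' slot to fill."""
    lines = ["Classify the sentiment of each review:", ""]
    for text, label in examples:
        lines += [f'Review: "{text}"', f"Sentiment: {label}", ""]
    lines += [f'Review: "{review}"', "Sentiment:"]
    return "\n".join(lines)

if __name__ == "__main__":
    review = "The food was decent but the service was painfully slow."
    print(build_zero_shot(review))
    print("-" * 40)
    print(build_few_shot(review))
```

In a full script, each string would be sent as the user message of an API call and the raw replies printed side by side; the few-shot prompt's trailing "Sentiment:" is what nudges the model toward a one-word answer.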

Why This Matters for Agents

For agents, output format matters as much as correctness. Agent code must parse LLM responses, extracting tool names, arguments, and decisions. If the output format is unpredictable, parsing breaks. Few-shot prompting greatly reduces this risk by teaching the model your exact format through examples.
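To make the parsing concern concrete, here is a minimal sketch of a strict parser for a tool-call reply. It assumes a hypothetical convention in which the model must answer with a single JSON object of the form {"tool": ..., "arguments": {...}}; the names `parse_tool_call` and `ALLOWED_TOOLS` are illustrative, not part of any SDK.

```python
import json
from typing import Optional

# Hypothetical tool names; a real agent would derive these from its tool registry.
ALLOWED_TOOLS = {"read_file", "edit_file", "run_tests"}

def parse_tool_call(reply: str) -> Optional[dict]:
    """Return {"tool": ..., "arguments": ...} if the reply is a well-formed call, else None."""
    try:
        data = json.loads(reply.strip())
    except json.JSONDecodeError:
        # Free-form prose such as "Sure! I'll read main.py next." ends up here.
        return None
    if not isinstance(data, dict):
        return None
    tool = data.get("tool")
    args = data.get("arguments", {})
    if not isinstance(tool, str) or tool not in ALLOWED_TOOLS:
        return None
    if not isinstance(args, dict):
        return None
    return {"tool": tool, "arguments": args}

print(parse_tool_call('{"tool": "read_file", "arguments": {"path": "main.py"}}'))
print(parse_tool_call("Sure! I'll read main.py next."))  # None
```

When the parser returns None, agent code has to re-prompt or fall back somehow; consistent formatting from few-shot examples makes that path rarer.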

Beyond format consistency, in-context learning enables several practical capabilities:

- Teaching tool usage by showing example tool calls in the system prompt

- Establishing coding style by including example code

- Defining output formats by demonstrating them

- Correcting specific behaviors by showing the right approach alongside the wrong one
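The first item, teaching tool usage, might look like the following sketch: a system prompt assembled from an instruction plus a few demonstrated tool calls. The tool names, the JSON reply format, and the helper `build_system_prompt` are hypothetical; many provider APIs also offer native tool calling, which this sketch does not use.

```python
import json

# Hypothetical (user request, tool call) demonstrations.
TOOL_EXAMPLES = [
    ("Show me the contents of config.yaml",
     {"tool": "read_file", "arguments": {"path": "config.yaml"}}),
    ("Run the test suite",
     {"tool": "run_tests", "arguments": {}}),
]

def build_system_prompt(examples=TOOL_EXAMPLES) -> str:
    """Instruction followed by demonstrations of the exact reply format."""
    parts = [
        "You are a coding agent. Reply with exactly one JSON object",
        'of the form {"tool": <name>, "arguments": {...}} and nothing else.',
        "",
        "Examples:",
    ]
    for request, call in examples:
        parts.append(f"User: {request}")
        # json.dumps guarantees each demonstrated call is valid JSON.
        parts.append(f"Assistant: {json.dumps(call)}")
    return "\n".join(parts)

print(build_system_prompt())
```

Generating the demonstrations with `json.dumps` rather than typing them by hand keeps every example syntactically valid, so the model is never shown a malformed pattern to imitate.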

The trade-off is token cost. Every example consumes tokens, and those tokens are resent with every API call. Three examples at 50 tokens each add 150 tokens to the system prompt, and therefore to every request. For agents running in long loops with many API calls, this cost compounds. The practical guidance is to find the minimum number of examples that produces reliable behavior, typically two to five, and stop there.
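The compounding is simple arithmetic. The sketch below uses the paragraph's own figure of three 50-token examples and a hypothetical 40-call agent loop; it counts only the extra input tokens the examples add and ignores prompt caching and per-model pricing.

```python
def example_overhead(num_examples: int, tokens_per_example: int, num_calls: int) -> int:
    """Extra input tokens the few-shot examples add across an agent run."""
    per_call = num_examples * tokens_per_example  # resent with every request
    return per_call * num_calls

per_call = example_overhead(3, 50, 1)
per_run = example_overhead(3, 50, 40)  # 40 calls is a hypothetical loop length
print(per_call, per_run)  # 150 6000
```

Going from three examples to five adds another 4,000 tokens over the same 40-call run, which is why the guidance is to stop at the smallest set that works.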

When Prompting Hits Its Limits

In-context learning and careful prompting can accomplish a great deal, but there are hard limits that agent developers inevitably encounter.

Context Rot

As context grows, the model's ability to attend to relevant information degrades. In a simple chat, this might mean the model forgets something from 30 messages ago. For an agent running in a loop, the problem is more severe.

Consider what happens during a typical agent task:

- The agent reads a file — 500 tokens added to context

- The agent reads another file — 500 more tokens

- The agent edits a file — both the old content and new content are in context

- The agent reads the file again to verify — another 500 tokens

- After 10 tool calls, there may be 5,000+ tokens of tool results that are no longer relevant

The context fills with historical information that was useful at the time but is now noise. The model must attend to all of it, and its attention is spread thinner with each addition. Quality often does not degrade smoothly: past some point, the model starts making mistakes it would not have made with a clean context.
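The accumulation in the list above can be simulated with a running token tally. The per-step counts are the list's illustrative figures, plus an assumed 1,000 tokens for the edit (old and new content together) and an assumed 2,000-token system prompt; this is a toy model, not a real tokenizer.

```python
# Illustrative token counts per tool call.
STEPS = [
    ("read_file a.py", 500),
    ("read_file b.py", 500),
    ("edit_file a.py", 1000),  # assumed: 500 tokens old content + 500 new
    ("read_file a.py (verify)", 500),
]

def simulate(steps, system_prompt_tokens=2000):
    """Yield (step, running_total) as each tool result is appended to context."""
    total = system_prompt_tokens  # assumed system prompt size
    for name, tokens in steps:
        total += tokens  # nothing is ever removed
        yield name, total

for name, total in simulate(STEPS):
    print(f"{name:<26} context = {total} tokens")
```

Nothing leaves the context in this loop, which is the point: the first, now-outdated read of a.py is still sent and attended to on every later call.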

This is especially dangerous when combined with an inflated system prompt. If few-shot examples push the system prompt to thousands of tokens, and tool results add thousands more, the model can reach the point of diminishing returns quickly. A common rule of thumb is that output quality begins to deteriorate once usage passes roughly 80% of the context window, though the exact point varies by model and task.
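An agent loop can watch for the 80% figure explicitly. This sketch assumes a 200,000-token window and treats 80% as a tunable heuristic rather than a hard limit; `needs_compaction` is a hypothetical helper, and in practice the token count would come from the usage figures the API reports or from a tokenizer.

```python
CONTEXT_WINDOW = 200_000      # assumed window size; check your model's documentation
COMPACTION_THRESHOLD = 0.80   # rule-of-thumb threshold, not a hard limit

def context_usage(used_tokens: int, window: int = CONTEXT_WINDOW) -> float:
    """Fraction of the context window currently occupied."""
    return used_tokens / window

def needs_compaction(used_tokens: int, window: int = CONTEXT_WINDOW,
                     threshold: float = COMPACTION_THRESHOLD) -> bool:
    """True once usage is at or beyond the threshold and the context should be trimmed."""
    return context_usage(used_tokens, window) >= threshold

print(context_usage(150_000))     # 0.75
print(needs_compaction(150_000))  # False
print(needs_compaction(165_000))  # True
```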

Conflicting Instructions

As system prompts grow longer and more detailed, instructions can subtly contradict each other. "Be concise" and "always explain your reasoning" pull in opposite directions. The model has no reliable way to resolve such contradictions; in effect it blends them, sometimes favoring one instruction and sometimes the other, which produces inconsistent behavior that is difficult to debug.

The Single-Context Constraint

Everything must fit in one context window. You cannot split a task across multiple contexts and have the model maintain coherence. Multi-agent systems work around this limitation, but that is a later topic.

From Prompt Engineering to Context Engineering

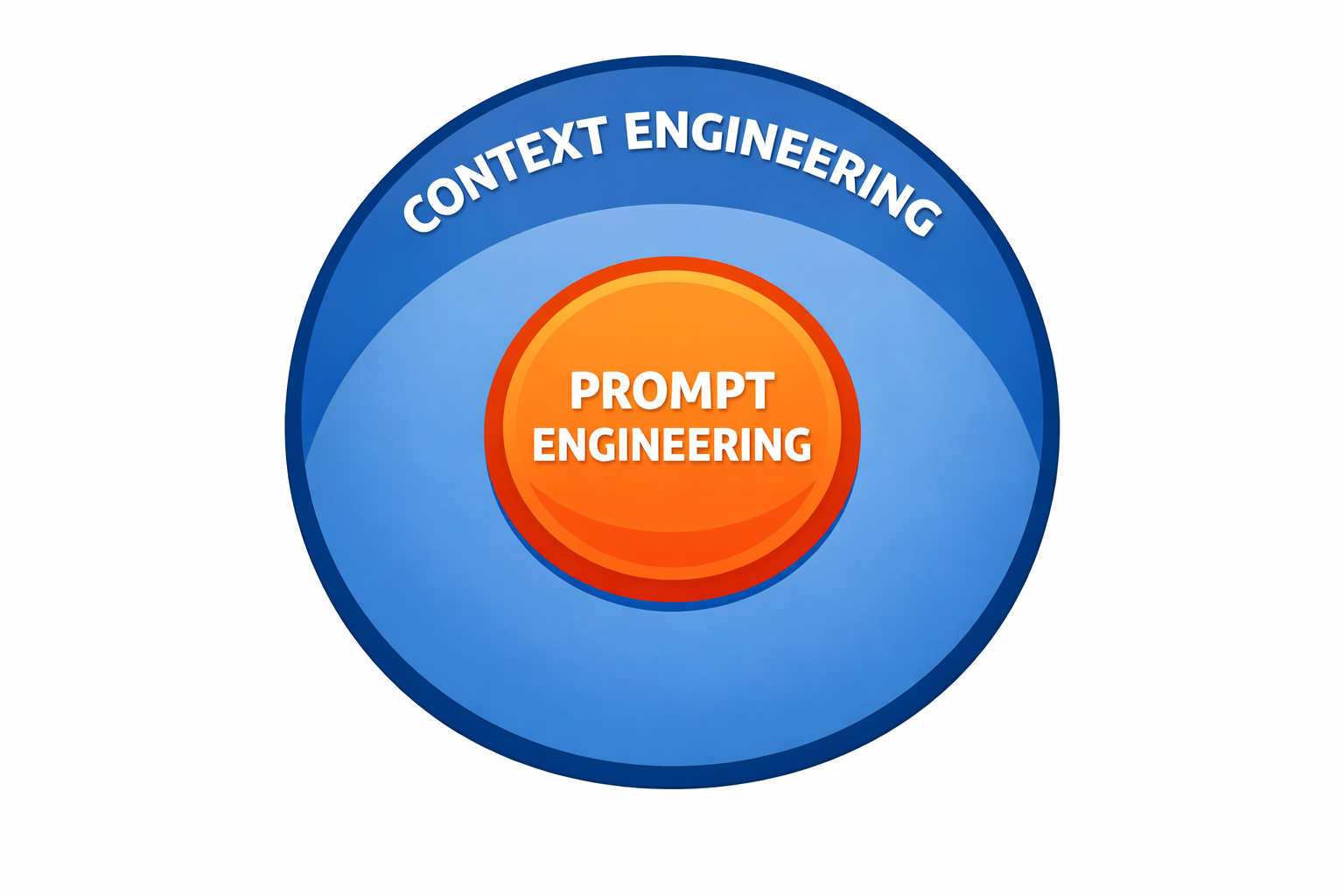

The field has shifted from "prompt engineering" — how to write a better prompt — to "context engineering." The distinction matters.

Prompt engineering asks: "How do I write a better prompt?"

Context engineering asks: "How do I curate the entire context state — system prompt, conversation history, tool results, external data — so the model has exactly what it needs and nothing it doesn't?"

Prompt engineering is a subset of context engineering. The system prompt is one piece of the context. But for agents, the system prompt might be 5% of the total context. The other 95% is conversation history, tool results, and accumulated data — and that is where output quality lives or dies.

The Context Engineering Mindset

Thinking in terms of context engineering changes the questions you ask:

- Not "how do I phrase this instruction?" but "what information does the model need right now?"

- Not "how do I make the prompt longer?" but "how do I keep the context small and high-signal?"

- Not "why didn't the model follow my instruction?" but "what else is in the context that's competing for attention?"
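The second question, keeping the context small and high-signal, can be framed as selection under a token budget. In this sketch each candidate snippet carries a relevance score that is simply assumed (a real system might derive it from embedding similarity or a reranker), and a greedy pass keeps the highest-scoring snippets that fit. `select_context` and the sample data are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Snippet:
    text: str
    tokens: int
    relevance: float  # assumed score in [0, 1]; how it is computed is out of scope

def select_context(snippets: list[Snippet], budget: int) -> list[Snippet]:
    """Greedily keep the most relevant snippets whose combined size fits the budget."""
    chosen, used = [], 0
    for s in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        if used + s.tokens <= budget:
            chosen.append(s)
            used += s.tokens
    return chosen

candidates = [
    Snippet("auth.py: login()", 400, 0.92),
    Snippet("old test run log", 1200, 0.15),
    Snippet("README install section", 300, 0.40),
    Snippet("auth.py: session handling", 600, 0.81),
]
for s in select_context(candidates, budget=1200):
    print(s.text)
```

Greedy-by-score is the simplest policy and can leave budget unused: here the 300-token README section no longer fits after the two higher-scoring snippets take 1,000 tokens. Ranking by score per token is a common alternative.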

It also means managing the full lifecycle of information in the context:

- What enters — tool results, messages, examples

- What stays — which messages to preserve, which to drop

- What leaves — summarization, truncation, compaction

- What gets priority — beginning and end of context receive more attention than the middle
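The "what stays" and "what leaves" decisions can be as simple as a rule over the message list. This minimal sketch assumes a generic list of dicts with `role` and `content` keys (real provider message schemas differ, for example in how tool results are represented) and replaces all but the most recent tool results with a short placeholder, a crude form of truncation. `drop_stale_tool_results` is hypothetical.

```python
def drop_stale_tool_results(messages: list[dict], keep_last: int = 2) -> list[dict]:
    """Replace all but the last `keep_last` tool results with a placeholder.

    Assumes a simplified schema where tool results use role "tool".
    Returns a new list; the input is not modified.
    """
    tool_idx = [i for i, m in enumerate(messages) if m["role"] == "tool"]
    stale = set(tool_idx[:-keep_last]) if keep_last else set(tool_idx)
    out = []
    for i, m in enumerate(messages):
        if i in stale:
            # Keep a stub so the model still sees that the call happened.
            out.append({"role": "tool", "content": "[earlier tool result removed]"})
        else:
            out.append(m)
    return out

history = [
    {"role": "system", "content": "You are a coding agent."},
    {"role": "user", "content": "Fix the login bug."},
    {"role": "tool", "content": "<contents of a.py>"},
    {"role": "tool", "content": "<contents of b.py>"},
    {"role": "tool", "content": "<edit diff for a.py>"},
    {"role": "tool", "content": "<contents of a.py after edit>"},
]
trimmed = drop_stale_tool_results(history)
for m in trimmed:
    print(m["role"], "|", m["content"])
```

Replacing the content rather than deleting the message is deliberate: in APIs that pair each tool result with an earlier tool call, deleting one side can make the history invalid. The system prompt stays at the start and the newest results stay at the end, where they tend to get the most attention.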

Context curation is a programmatic task. You can remove stale information, prioritize placement, inject relevant data based on the current query, and use smaller models (such as Anthropic's Haiku models) to summarize old messages or select between system prompt variants. This metaprogramming, using LLMs to manage the context of other LLM calls, is a core pattern in agent development.
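The summarization pattern can be sketched with the small model abstracted behind a plain function. `compact_history` and `summarize` are hypothetical; in a real agent, `summarize` would wrap an API call to a smaller, cheaper model, while here a stub stands in so the structure is visible without network access.

```python
from typing import Callable

def compact_history(messages: list[dict],
                    summarize: Callable[[str], str],
                    keep_recent: int = 4) -> list[dict]:
    """Fold all but the most recent messages into one summary message.

    The first message (assumed to be the system prompt) is always kept as-is.
    """
    head, body = messages[:1], messages[1:]
    if len(body) <= keep_recent:
        return list(messages)  # nothing old enough to summarize
    split = len(body) - keep_recent
    old, recent = body[:split], body[split:]
    transcript = "\n".join(f"{m['role']}: {m['content']}" for m in old)
    # Role "user" for the summary is an assumption; adapt to your provider's schema.
    summary = {"role": "user",
               "content": "Summary of earlier conversation:\n" + summarize(transcript)}
    return head + [summary] + recent

def fake_summarize(text: str) -> str:
    # Stand-in for a small-model call; reports how many lines it condensed.
    return f"[{len(text.splitlines())} earlier messages condensed]"

history = [{"role": "system", "content": "You are a coding agent."}] + [
    {"role": "user" if i % 2 == 0 else "assistant", "content": f"turn {i}"}
    for i in range(10)
]
compacted = compact_history(history, fake_summarize)
print(len(history), "->", len(compacted))  # 11 -> 6
```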

The Central Discipline

Context engineering — curating the smallest possible set of high-signal tokens — is the central discipline of agent development. Everything built from this point forward in the course — context management strategies, RAG, memory systems, skills, multi-agent architectures — is a context engineering technique. Each addresses a different aspect of the same problem: ensuring the model has exactly the information it needs to make its next decision, and nothing more.

Module 3 in Review

This module moved from theory to practice:

- Lecture 3.1 — anatomy of an API call, message roles, conversation history, error handling

- Lecture 3.2 — the model landscape, tiers, costs, local models

- Lecture 3.3 — temperature, sampling parameters, output control

- Lecture 3.4 — in-context learning, context rot, context engineering

The next module goes deeper into context windows — measuring them, managing them, and designing tools that keep them small.